This is a blog post I have wanted to write for quite some time. It addresses what I believe to be the single most important issue when modeling information for legal informatics. It is also, I believe, the most urgent aspect that we need to agree upon in order to promote legal informatics as a real emerging industry. Today, most jurisdictions are simply cobbling together short-term solutions without much consideration for the big picture. With something this important, we need to look at the big picture first and come up with a lasting solution.

Citations, references, or links are a very important aspect of the law. Laws are inherently a web of interconnections and interdependencies. Correctly resolving those connections allows us to correctly interpret the law. Mistakes or ambiguities in how those connections are made are completely unacceptable.

In addition to my work on the OASIS LegalDocumentML technical committee, I work on projects around the world. As I travel to the four corners of the Earth, I am starting to see more clearly how this problem can be solved in a clean and extensible manner.

There are, of course, already many proposals to address this. The two I have looked at the most are both from Italy:

• A Uniform Resource Name (URN) Namespace for Sources of Law (LEX)

• Akoma Ntoso References (in the process of being standardized by OASIS)

My thoughts derive from these two approaches, both of which I have implemented in one way or another, with varying degrees of success. My earliest ideas were quite similar to the LEX-URN proposal in that they were based around URNs. However, over time Fabio Vitali at the University of Bologna has convinced me that the approach he and Monica Palmirani put forth with Akoma Ntoso using URLs is more practical. While URNs have their appeal, they really have not achieved the critical mass of adoption needed to be practical. Also, the general reaction I have gotten to LEX-URN encoded references has not been positive. There is just too much special encoding going on within them for them to be readable by the uninitiated.

Requirements

Before diving into this subject too deeply, let’s define some basic requirements. In order to be effective, a reference must:

• Be unambiguous.

• Be predictable.

• Be adaptable to all jurisdictions, legal systems, and all the quirks that arise.

• Be universal in application and reach.

• Be implementable with current tools and technologies.

• Be long lasting and not tied to any specific implementation.

• Be understandable to mere mortals like myself.

URI/IRI

URIs (Uniform Resource Identifiers) give us a way to identify resources in a computing system. We’re all familiar with URLs that allow us to retrieve pages across the web using hierarchical locations. Less well known are URNs which allow us to identify resources using a structured name which presumably will then be located using some form of a service to map the name to a location. The problem is, a well-established locating service has never come about. As a result, URNs have languished as an idea more than a tool. Both URLs and URNs are forms of URIs.

IRIs (Internationalized Resource Identifiers) are a generalization of URIs that allows characters outside of the ASCII character set supported by normal URIs. This is important in jurisdictions whose languages use characters that ASCII does not support.
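As a minimal sketch of what this means in practice, the Python snippet below percent-encodes a path containing a non-ASCII character so that it can travel as a plain ASCII URI, and then reverses the encoding. The German-language path is made up for illustration and does not come from either proposal.

```python
from urllib.parse import quote, unquote

# Hypothetical IRI-style path containing a non-ASCII character (illustration only).
iri_path = "/de/gesetze/bürgerliches-gesetzbuch"

# quote() encodes the text as UTF-8 and percent-escapes every non-ASCII byte,
# leaving "/" untouched by default.
uri_path = quote(iri_path)
print(uri_path)

# unquote() reverses the mapping, so no information is lost in the round trip.
assert unquote(uri_path) == iri_path
```

The readable form is what people would cite; the escaped form is what older tools that only understand ASCII URIs would exchange.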

Given the current state of the art in software technology, basing references on URIs/IRIs makes a lot of sense. Using the URL/IRL variant is the safer and more universally accepted approach.

FRBR

FRBR stands for Functional Requirements for Bibliographic Records. It is a conceptual entity-relationship model developed by librarians for modeling bibliographic information in databases. In recent years it has received a fair amount of attention for use as the basis for legal references. In fact, both the LEX-URN and the Akoma Ntoso models are based, somewhat loosely, on the model. At times, there is some controversy as to whether this model is appropriate or not. My intent is not to debate the merits of FRBR. Instead, I simply want to acknowledge that it provides a good overall model for thinking about how a legal reference should be constructed. In FRBR, there are four main entities:

1. Work – The work is the “what”, allowing us to specify what it is that we are referring to, independent of which version or format we are interested in.

2. Expression – The expression answers the “from when” question, allowing us to specify, in some manner, which version, variant, or time frame we are interested in.

3. Manifestation – The manifestation is the “which format” part, where we specify the format that we would like the information returned as.

4. Item – The item is the “from where” part. When multiple sources of the information are available, it allows us to specify which source we want the information to come from.

That’s all I want to mention about FRBR. I want to pick up the four concepts and work from them.

What do we want?

Picking up the Akoma Ntoso model for specifying a reference as a URL, and mindful of our basic requirements, a useful model to reference a resource is as a hierarchical URL, starting by specifying the jurisdiction and then working hierarchically down to the item in question.

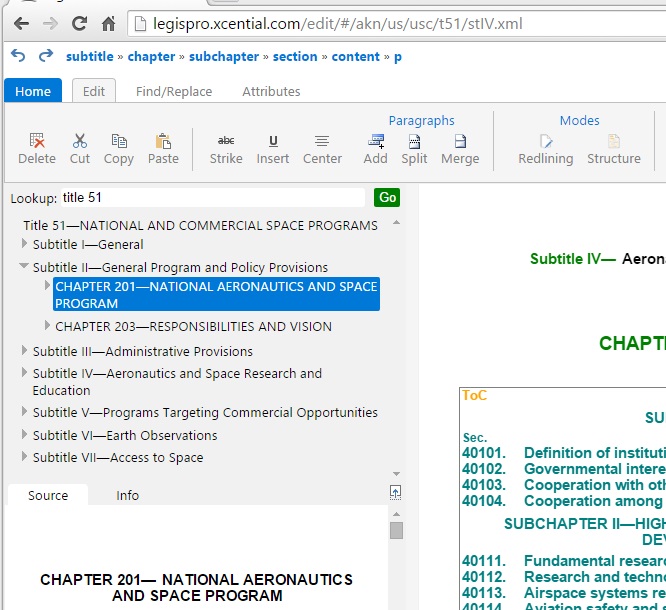

This brings me to the biggest hurdle I have come across when working with the existing proposals. It’s not terribly clear what a reference should look like when the item being referenced is a sub-part of a resource being modeled as an XML document. For instance, how would I refer to section 500 of the California Government Code? Without putting in too much thought, the answer might be something like /us-ca/codes/gov.xml#sec500, using a URL to identify the Government Code followed by a fragment identifier specifying section 500 of the Government Code. The LEX URN proposal actually suggests using the # fragment identifier, referring to the fragment as a partition.

There are two problems with this solution though. First, any browser will split a reference that uses a fragment identifier into two parts: the part before the # identifies the resource to be retrieved from the server, and the part after it is an “id” of the item to scroll to. Retrieving the huge Government Code when all we want is the one sentence in Section 500 is a terrible solution. The second problem is that it defines, possibly for all time, how a large document might have been constructed out of sub-documents. For example, is the US Code one very large document, does it consist of documents made out of the Titles, or, as it is quite often modeled, is every section a different document? It would be better if references did not capture any part of this implementation decision.

A better approach is to allow the “what” part of a reference to be specified as a virtual URL all the way down to whatever is wanted, even when the “what” is found deep inside an XML document in a current implementation. For example, the reference would better be specified as /us-ca/codes/gov/sec500. That way, the reference does not expose where the document boundaries currently exist.
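To illustrate how a virtual path can stay independent of document boundaries, here is a small Python sketch. The storage table, the file names, and the locate function are all assumptions made up for this example; a real system would decide these details for itself.

```python
from typing import Optional

# Hypothetical storage layout: which virtual paths happen to be stored as
# whole XML documents today. This is an implementation detail that can
# change without changing any reference.
STORED_DOCUMENTS = {
    "/us-ca/codes/gov": "gov.xml",
}


def locate(reference: str) -> Optional[tuple[str, str]]:
    """Return (file, path inside the file) for a virtual reference.

    Walks up the hierarchy until it reaches a level that is stored as a
    document; whatever is left over identifies the part inside it.
    """
    parts = reference.strip("/").split("/")
    for cut in range(len(parts), 0, -1):
        prefix = "/" + "/".join(parts[:cut])
        if prefix in STORED_DOCUMENTS:
            return STORED_DOCUMENTS[prefix], "/".join(parts[cut:])
    return None


print(locate("/us-ca/codes/gov/sec500"))  # ('gov.xml', 'sec500')
```

If the code were later split into one file per section, only the storage table would change. The reference /us-ca/codes/gov/sec500 would stay exactly as it was.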

On to the next issue, what happens when there is more than one possible way to reference the same item? For example, the sections in California’s codes, as is usually the case, are numbered sequentially with little regard to the heading hierarchy above the sections. So a reference specified as /us-ca/codes/gov/sec500 is clear, concise, and unambiguous. It follows the manner in which sections are cited in the text. But /us-ca/codes/gov/title1/div3/chap6/sec500 is simply another way to identify the exact same section. This happens in other places too. /us-ca/statutes/2012/chap5 is the same document as /us-ca/bills/2011/sb730. So two paths identify the same document. Do we allow two identities? Do we declare one as the canonical reference and the other as an alternate? It’s not clear to me.
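One possible answer, sketched below in Python, is to declare one form canonical and treat the others as aliases. I have not settled on this; the alias table and the choice of which path is canonical are arbitrary assumptions made only to show the mechanics.

```python
# Hypothetical alias table mapping alternate paths to the path chosen as
# canonical. Which form should be canonical is an open question; the choice
# here is arbitrary.
ALIASES = {
    "/us-ca/codes/gov/title1/div3/chap6/sec500": "/us-ca/codes/gov/sec500",
    "/us-ca/bills/2011/sb730": "/us-ca/statutes/2012/chap5",
}


def canonical(reference: str) -> str:
    """Return the canonical form of a reference, or the reference itself."""
    return ALIASES.get(reference, reference)


def same_item(a: str, b: str) -> bool:
    """Two references identify the same item if their canonical forms match."""
    return canonical(a) == canonical(b)


print(same_item("/us-ca/bills/2011/sb730", "/us-ca/statutes/2012/chap5"))  # True
```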

What about ambiguity? Mistakes happen and odd situations arise. Take a look at both Chapter 14s that exist in Division 6 of Title 1 of the California Government Code. There are many reasons why this happens. Sometimes it’s just a mistake and sometimes it’s quite deliberate. We have to be able to support this. In California, we disambiguate by using “qualifying language” which we embed somehow into the reference. The qualifying language specifies the last statute to create or amend the item needing disambiguation.

The From When do we want it?

A hierarchical path identifies, with some disambiguation, what it is we want. But chances are that what we want has varied over time. We need a way to specify the version we’re looking for or ask for the version that was valid at a specific point in time. Both the LEX URN and the Akoma Ntoso proposals for references suggest using an “@” sign together with some nomenclature which identifies a version or date. (The Akoma Ntoso proposal adds the “:” sign as well.)

A problem does arise with this approach though. Sometimes we find that multiple versions exist at a particular date. These versions are all in effect, but based on some conditional logic, only one might be operational at a particular time. How one deals with operational logic can be a bit tricky at times. That’s an open issue to me still.
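Setting the multiple-versions case aside, the simple case of asking for the version in effect on a given date can be sketched in Python as follows. The version history and labels are invented for illustration, and the sketch assumes the date after the “@” is written as YYYY-MM-DD with no format suffix.

```python
from bisect import bisect_right
from datetime import date
from typing import Optional

# Hypothetical version history: (effective date, version label), sorted by date.
VERSIONS = [
    (date(2009, 1, 1), "v1"),
    (date(2011, 7, 1), "v2"),
    (date(2013, 1, 1), "v3"),
]


def split_expression(reference: str) -> tuple[str, Optional[date]]:
    """Split 'path@YYYY-MM-DD' into (path, date); date is None if absent."""
    path, sep, when = reference.partition("@")
    return path, (date.fromisoformat(when) if sep else None)


def version_on(when: date) -> Optional[str]:
    """Return the label of the latest version effective on or before `when`."""
    effective_dates = [d for d, _ in VERSIONS]
    index = bisect_right(effective_dates, when)
    return VERSIONS[index - 1][1] if index else None


path, when = split_expression("/us-ca/codes/gov/sec500@2012-01-01")
print(path, version_on(when))  # /us-ca/codes/gov/sec500 v2
```

This picks exactly one version per date, which is precisely the assumption that breaks down when several versions are in effect at once.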

Which Format do we want?

I find specifying the format to be relatively uncontroversial. The question is whether we specify the format using well-established file extensions such as .pdf, .odt, .docx, .xml, and .html or whether we instead try to be more precise by embedding or encoding the MIME type into the reference. Personally, I think that simple extensions, while less rigorous and subject to unfortunate variations and overlaps, are far more likely to be adopted than any scheme that tries to use the MIME type somehow. Simple generally wins over rigorous but more complex solutions.
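The two options are not mutually exclusive: a resolver can accept the simple extension and map it to a MIME type internally. The Python sketch below assumes a small fixed table of formats. The split_format function and the sec500.5 path are hypothetical, the latter included only to show the kind of overlap that a dot in a section number could cause.

```python
import posixpath
from typing import Optional

# Extension-to-MIME-type table for the formats named above.
FORMATS = {
    ".pdf": "application/pdf",
    ".odt": "application/vnd.oasis.opendocument.text",
    ".docx": "application/vnd.openxmlformats-officedocument.wordprocessingml.document",
    ".xml": "application/xml",
    ".html": "text/html",
}


def split_format(reference: str) -> tuple[str, Optional[str]]:
    """Split a trailing known extension off a reference.

    Returns (reference without extension, MIME type), or (reference, None)
    when the suffix is not a known format.
    """
    stem, ext = posixpath.splitext(reference)
    mime = FORMATS.get(ext.lower())
    return (stem, mime) if mime else (reference, None)


print(split_format("/us-ca/codes/gov/sec500@2012-01-01.pdf"))
# A dot that belongs to the name itself is left alone.
print(split_format("/us-ca/codes/gov/sec500.5"))
```

Only suffixes found in the table are treated as formats, which is one way to keep decimal section numbers from being mistaken for file extensions.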

The From Where should it come?

This last part, the from where should it come part, is something that is often omitted from the discussion. However, in a world where multiple libraries offering the same resource will quite likely exist, this is really important. Let’s take a look at the primary example once more. We want section 500 of the California Government Code. The reference is encoded as /us-ca/codes/gov/sec500. Where is this information to come from? Without a domain specified, our URL is a local URL so the presumption is that it will be locally resolved: the local system will find it, somehow.

What if we don’t want to rely on a local resolution function? What if there are numerous sources of this data and we want to refer to one of them in particular? When we prepend the domain, aren’t we specifying where we want the information to come from? So if we say http://leginfo.ca.gov/us-ca/codes/gov/sec500, aren’t we now very precisely specifying the source of the information to be the official California source? Now, say the US Library of Congress decides to extend Thomas to offer state legislation. If we want to specify that copy, we would simply construct a reference as http://thomas.loc.gov/us-ca/codes/gov/sec500. It’s the same URL after the domain is specified.

If we leave the URL as simply /us-ca/codes/gov/sec500, we have a general reference and we leave it to the local system to provide the resolution service for retrieving and formatting the information. We probably want to save references in a general fashion without a domain, but we certainly will need to refer to specific copies within the tools that we build.
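Moving between a general reference and a specific copy is ordinary URL arithmetic. The Python sketch below uses the standard urllib.parse module. The function names from_source and generalize are my own, and the hosts are the proposed (not working) addresses used in this post.

```python
from urllib.parse import urljoin, urlsplit

GENERAL = "/us-ca/codes/gov/sec500"


def from_source(source: str, reference: str) -> str:
    """Pin a general reference to a specific source by prepending its domain."""
    return urljoin(source, reference)


def generalize(url: str) -> str:
    """Drop the scheme and domain, keeping only the general reference."""
    return urlsplit(url).path


official = from_source("http://leginfo.ca.gov", GENERAL)
print(official)              # http://leginfo.ca.gov/us-ca/codes/gov/sec500
print(generalize(official))  # /us-ca/codes/gov/sec500
```

A tool could store only the general form and apply from_source at the moment it needs a particular copy.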

Resolvers

The key to making this all work is having resolvers that can interpret standardized references and find a way to provide the correct response. It is important to realize that these URLs are all virtual URLs. They do not necessarily resolve to files that exist. It is the job of the resolving service to either construct the valid response, possibly by digging into databases and files, or to negotiate with other resolvers that might do all or part of the job of providing a response. For example, imagine that Cornell University offers a resolver at http://lii.cornell.edu. It might, behind the scenes, work with the official data source at http://leginfo.ca.gov to source California legislation. Anyone around the world could use the Cornell resolver and be unaware of the work it is doing to source information from resolvers at the official sources around the world. So the local system would be pointed to the Cornell service and when the reference /us-ca/codes/gov/sec500 arose, the local system would defer to the LII service for resolution, which in turn would defer to California’s official resolver. In this way, the resolvers would bear the burden of knowing where all the official data sources around the world are located.
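The chain of deferral described here can be sketched as a toy Python class. Everything in it is an assumption made for illustration: the Resolver class, its routing table keyed by jurisdiction, and the placeholder content. Nothing touches the network, and the names are only stand-ins for the services mentioned above.

```python
from __future__ import annotations

from typing import Optional


class Resolver:
    """Toy resolver: answers from its own store, otherwise defers by jurisdiction."""

    def __init__(self, name: str,
                 store: Optional[dict[str, str]] = None,
                 routes: Optional[dict[str, Resolver]] = None) -> None:
        self.name = name
        self.store = store or {}    # references this resolver can answer itself
        self.routes = routes or {}  # jurisdiction -> resolver; "*" is the default

    def resolve(self, reference: str) -> Optional[tuple[str, str]]:
        """Return (content, name of the resolver that supplied it), or None."""
        if reference in self.store:
            return self.store[reference], self.name
        jurisdiction = reference.strip("/").split("/")[0]
        target = self.routes.get(jurisdiction) or self.routes.get("*")
        return target.resolve(reference) if target else None


# Placeholder content, not real statutory text.
official = Resolver("leginfo.ca.gov",
                    store={"/us-ca/codes/gov/sec500": "<section 500 text>"})
lii = Resolver("lii.cornell.edu", routes={"us-ca": official})
local = Resolver("local", routes={"*": lii})

print(local.resolve("/us-ca/codes/gov/sec500"))
# ('<section 500 text>', 'leginfo.ca.gov')
```

The local system only needs to know about one upstream service; the knowledge of which official source serves which jurisdiction lives in the intermediate resolver's routing table.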

Examples

So to end, I would like to sum up with some examples:

[Note that the links are proposals, using a modified and simplified form of the Akoma Ntoso proposal, rather than working links at this point]

/us-ca/codes/gov/sec500

– Get section 500 of the California Government Code. It’s up to the local service to decide where and how to resolve the reference.

http://leginfo.ca.gov/us-ca/codes/gov/sec500

– Get Section 500 of the California Government Code from the official source in California.

http://lii.cornell.edu/us-ca/codes/gov/sec500

– Get Section 500 of the California Government Code from Cornell’s LII and have them figure out where to get the data from.

/us-ca/codes/gov/sec500@2012-01-01

– Get Section 500 of the California Government Code as it existed on January 1, 2012.

/us-ca/codes/gov/sec500@2012-01-01.pdf

– Get Section 500 of the California Government Code as it existed on January 1, 2012, in a PDF format.

/us-ca/codes/gov/title1/div3/chap6/sec500

– Get Section 500 of the California Government Code, but with the full hierarchy specified.
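To show that references of this shape can be taken apart mechanically, here is a Python sketch that splits each example into the four FRBR-inspired parts. The grammar it assumes (an optional domain, a path, an optional @YYYY-MM-DD date, and an optional extension from a short fixed list) is my own simplification for this post, not the Akoma Ntoso or LEX syntax.

```python
import re
from typing import NamedTuple, Optional
from urllib.parse import urlsplit

# Assumed list of recognized format extensions.
KNOWN_FORMATS = ("pdf", "odt", "docx", "xml", "html")


class Reference(NamedTuple):
    item: Optional[str]           # from where: the domain, if any
    work: str                     # what: the hierarchical path
    expression: Optional[str]     # from when: the date after "@", if any
    manifestation: Optional[str]  # which format: the extension, if any


_PATH = re.compile(
    r"^(?P<work>[^@]+?)"
    r"(?:@(?P<expression>\d{4}-\d{2}-\d{2}))?"
    r"(?:\.(?P<manifestation>" + "|".join(KNOWN_FORMATS) + r"))?$"
)


def parse(reference: str) -> Reference:
    """Split a reference into item, work, expression, and manifestation."""
    parts = urlsplit(reference)
    match = _PATH.match(parts.path)
    if match is None:
        raise ValueError(f"not a recognized reference: {reference!r}")
    return Reference(parts.netloc or None, match["work"],
                     match["expression"], match["manifestation"])


for example in (
    "/us-ca/codes/gov/sec500",
    "http://leginfo.ca.gov/us-ca/codes/gov/sec500",
    "/us-ca/codes/gov/sec500@2012-01-01",
    "/us-ca/codes/gov/sec500@2012-01-01.pdf",
):
    print(parse(example))
```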

This post has gotten very long and I have only just started to scratch the surface. I haven’t addressed multilingual issues, alternate character sets, and a host of other issues at all. It should already be apparent that this is all simply a natural extension of the URLs we already use, but with sophisticated services underneath resolving to items other than simple files. Imagine for a moment how the field of legal informatics could advance if we could all agree to something this simple and comprehensive soon.

What do you think? Are there any other proposals, solutions, or prototypes out there that address this? How does the OASIS LegalDocumentML work factor into this?